Stop Guessing, Start Testing: A/B Testing AI Prompts for Maximum Impact


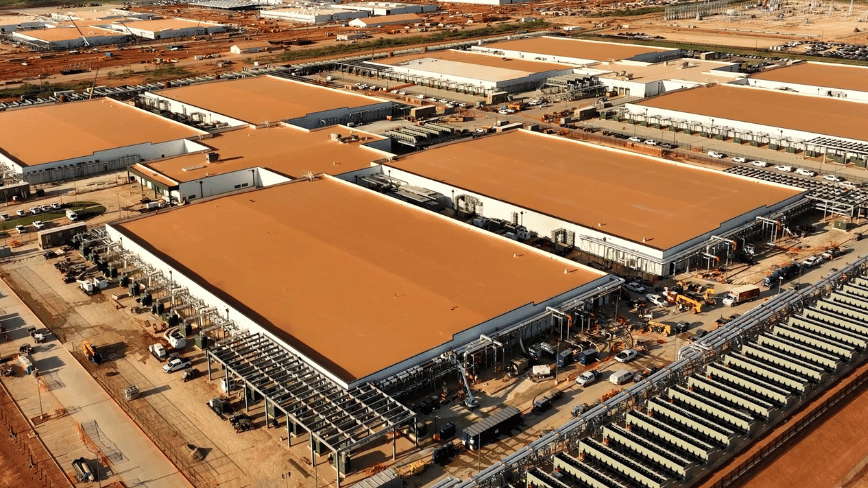

Source: DEV Community

Large Language Models (LLMs) are powerful, but getting the right output isn’t always easy. A slight tweak to a prompt can dramatically change the results. Instead of relying on intuition, what if you could systematically test different prompts and let data decide which performs best? That’s the power of A/B testing prompts in production. This article dives into how to implement this crucial practice, leveraging cutting-edge technologies like Edge Runtimes, Ollama, Transformers.js, and WebGPU to optimize your AI applications.

The Problem with Prompt Engineering (and Why A/B Testing is the Solution)

Imagine you’re a chef perfecting a signature dish. You try a new spice blend, but aren’t sure if customers will love it. Do you immediately switch the entire menu? No! You’d likely offer both versions to different customers and track which one gets better reviews.

Prompt engineering is similar. It’s the art of crafting instructions for LLMs. But unlike traditional software where the same inpu